A Unified Framework for LLM-based ReLabel Method

Abstract

In industrial deep learning applications, datasets contain a certain amount of noisy data. The initial datasets come from human labeling, LLM (large language model) generation, or user behavior logs. To achieve over 90% accuracy on the dev and test datasets, we propose a framework that identifies noisy and badcase data, relabels it using an LLM, and constrains the relabeling task to a binary classification problem. In this paper, we illustrate our idea for a broad set of deep learning tasks, including classification, sequence tagging, object detection, sequence generation, and click-through rate prediction. The dev dataset evaluation results and human evaluation results verify our idea.

Keywords

NLP, LLM

1. Introduction

In recent years, deep learning \cite{ref1} and LLMs \cite{ref2,ref3,ref4,ref5,ref7,ref8,ref9,ref10} have brought significant improvements to natural language processing (NLP), computer vision, and speech processing. However, model performance is limited by dataset quality, mainly because the dataset contains a certain amount of noisy and badcase data. In this paper, we present a framework to find the noisy and badcase data and relabel it for NLP tasks. Here, ‘NLP’ refers to a specific NLP task, such as NER, text classification, or specific text generation. Specifically, we define ‘NLP tasks’ as those that can be solved by the ‘data-cover’ paradigm. ‘LLM tasks’, on the other hand, refer to the paradigm that relies on trillion-token pre-training and million-token post-training data. Our idea can apply to a broad set of deep learning industry applications.

This paper’s contribution is the demonstration that the quality of a training dataset can be enhanced by first generating it with an LLM and subsequently using an LLM for re-annotation, without the need for manual re-annotation. Because LLM-based annotation is inherently unstable, the method proposed in this paper becomes a necessity.

2. Method

2.1 Initial Datasets

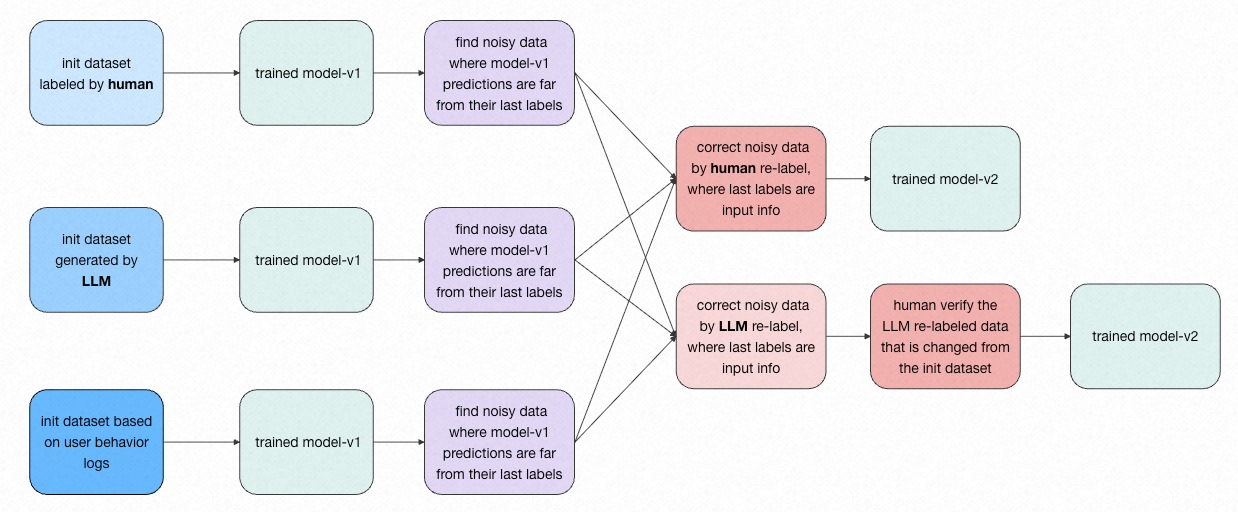

Our initial datasets can come from the following three sources:

1) Manual Annotation: Taking a classification task as an example, noise in a manually annotated dataset arises when annotators disagree. For instance, for 3 very similar samples to be labeled, 2 annotators assign label-A, while 1 annotator assigns label-B.

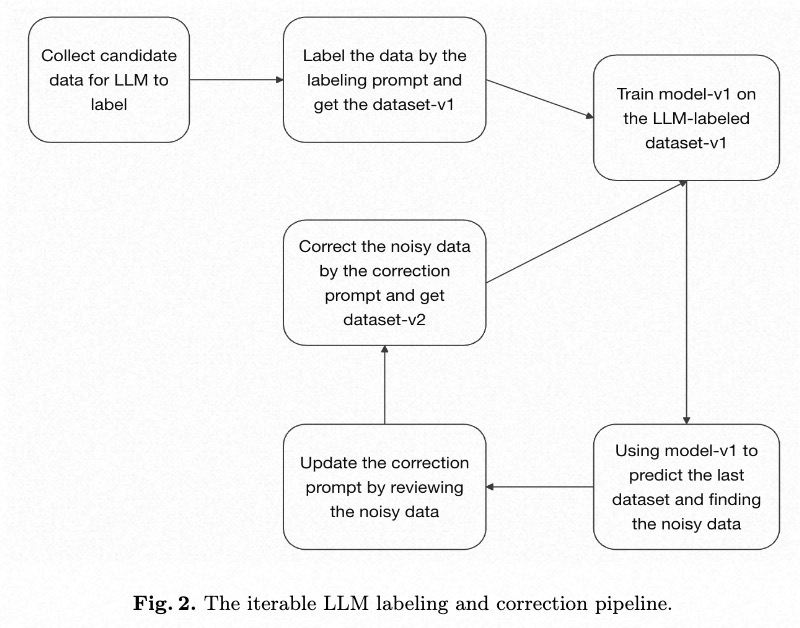

2) LLM Generation: For datasets generated by an LLM, data noise in a classification task often stems from overlapping or repetitive label definitions within the prompts. Before generating an initial training dataset using an LLM, it is crucial to first prepare a human-annotated, real-world test dataset. This test dataset should then be used to debug and refine the prompts so that the overall quality of the LLM’s annotations is as high as possible. Data generation by LLMs does not always have to start from scratch. By collecting logs from actual production use, we can extract a candidate dataset for annotation that is representative of real-world scenarios.

3) User Behavior Logs: Datasets based on user behavior logs are constructed from user actions. For example, in an e-commerce scenario, a dataset can be built based on whether a user clicks on an item or places an order.

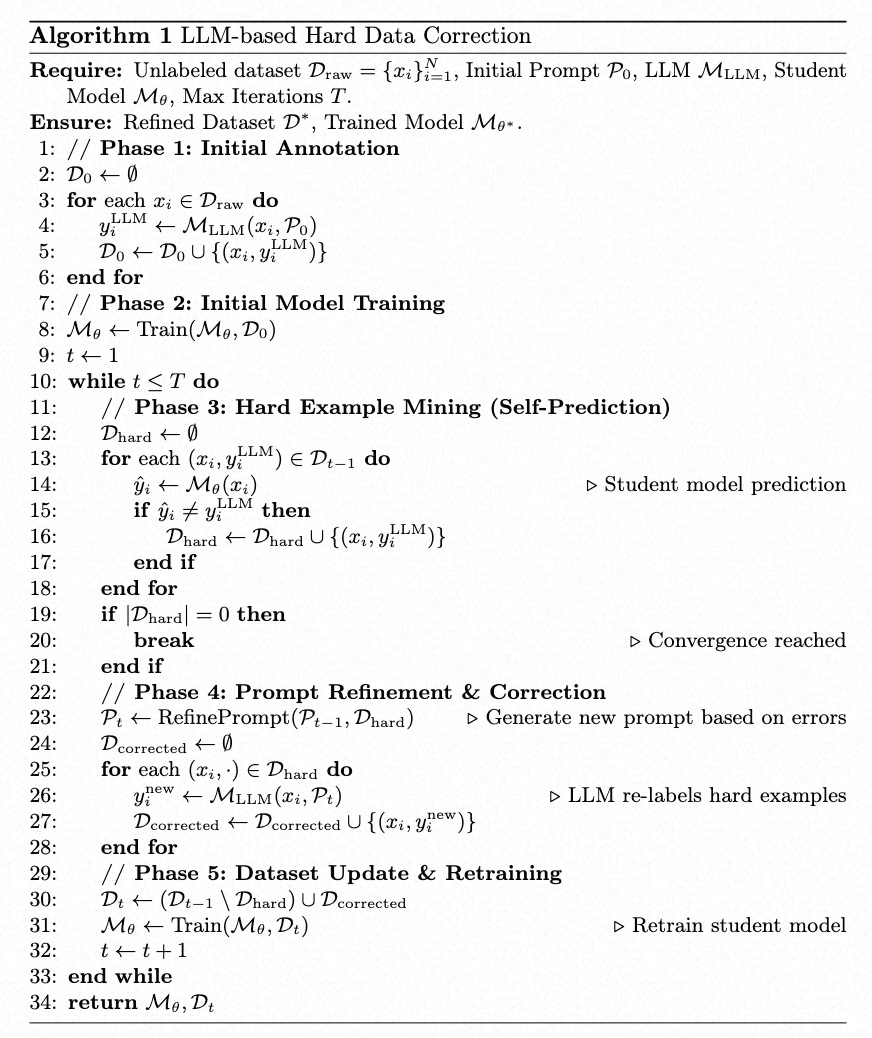

2.2 Find Noisy Data And Relabel

In this paper, we define noisy data as ambiguous data; for instance, three highly similar samples of which two are labeled ‘label-A’ and the remaining one ‘label-B’. We first train a model on the initial dataset. Specifically, we take the checkpoint at the point where the dev loss no longer decreases and use it as our model for self-prediction. We then use this model to generate predictions for the entire training and dev datasets. Samples where the model’s prediction differs from the original ground-truth label, or where the prediction error is large, are identified as potential noise/badcases. This method allows us to select approximately 2-10% of the dataset for re-annotation. This approach not only reduces manual annotation costs, but its effectiveness in identifying noisy data has also been validated by our experimental results.
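The self-prediction step for a classification task can be sketched as follows. This is a minimal illustration under stated assumptions, not the exact implementation: the function name `find_noise_candidates`, the use of cross-entropy loss as the measure of "prediction error", and the `max_loss` threshold are all hypothetical choices made for the example.

```python
import math
from typing import List, Sequence, Tuple


def find_noise_candidates(
    labels: Sequence[int],
    probs: Sequence[Sequence[float]],
    max_loss: float = 0.8,
) -> List[Tuple[int, int, int]]:
    """Return (index, original_label, predicted_label) for suspicious samples.

    A sample is flagged when the model's top prediction differs from the
    original label, or when the cross-entropy loss on the original label
    exceeds `max_loss` (an assumed stand-in for "prediction error is large").
    """
    flagged = []
    for i, (label, p) in enumerate(zip(labels, probs)):
        predicted = max(range(len(p)), key=lambda k: p[k])
        loss = -math.log(max(p[label], 1e-12))  # clamp to avoid log(0)
        if predicted != label or loss > max_loss:
            flagged.append((i, label, predicted))
    return flagged


# Toy data: 4 samples, 3 classes (values are made up for illustration).
toy_labels = [0, 1, 2, 1]
toy_probs = [
    [0.90, 0.05, 0.05],  # confident and consistent with the label
    [0.10, 0.80, 0.10],  # confident and consistent with the label
    [0.70, 0.20, 0.10],  # model predicts class 0, label says class 2
    [0.30, 0.40, 0.30],  # consistent, but the loss on the label is high
]
toy_candidates = find_noise_candidates(toy_labels, toy_probs)
```

Only the flagged samples are passed on to the relabeling step; the threshold controls how large a fraction of the dataset is selected.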

The noisy data can be re-annotated manually. During this process, we provide the human annotators with both the original label and the model’s prediction as input information. In the era of LLMs, we replace this manual re-annotation with an automated process using an LLM. Similarly, we feed the LLM the same inputs: the original label and the model’s prediction. In detail, the prompt asks the LLM to correct labeling errors made in the last round of labeling. We require the LLM’s correction output to be chosen from either the result of our trained model or the result of the previous annotation \cite{ref6}. For example, in a 10-class text classification task, the correction step for the LLM is simplified to a 2-class classification problem, where the candidates are just 2 labels: the previous annotation and the label predicted by the trained model. To be specific, in the prompt we use for LLM annotation during the correction step, we provide the definitions and examples only for the two candidate labels, and do not include the definitions and examples of the other labels.
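A two-candidate correction prompt of this kind might be assembled as in the sketch below. The prompt wording, the function names `build_relabel_prompt` and `parse_relabel_answer`, the example label names, and the fallback to the previous label when the LLM answers outside the candidate set are all assumptions for illustration; the call to the LLM itself is omitted.

```python
from typing import Dict


def build_relabel_prompt(
    text: str,
    previous_label: str,
    model_label: str,
    label_definitions: Dict[str, str],
) -> str:
    """Build a correction prompt restricted to two candidate labels.

    Only the definitions of the two candidates are included; definitions
    of all other labels are deliberately left out of the prompt.
    """
    lines = [
        "The previous label for the text below may be wrong.",
        "Choose the correct label from exactly these candidates:",
    ]
    for name in (previous_label, model_label):
        lines.append(f"- {name}: {label_definitions[name]}")
    lines.append(f"Text: {text}")
    lines.append("Answer with the label name only.")
    return "\n".join(lines)


def parse_relabel_answer(answer: str, previous_label: str, model_label: str) -> str:
    """Map the raw LLM answer onto one of the two candidates.

    Assumption: if the answer matches neither candidate, keep the
    previous label, so the result always stays in the candidate set.
    """
    cleaned = answer.strip().lower()
    for candidate in (model_label, previous_label):
        if cleaned == candidate.lower():
            return candidate
    return previous_label
```

Restricting the prompt to two definitions keeps it short and turns a many-class decision into a binary one, which is the constraint described above.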

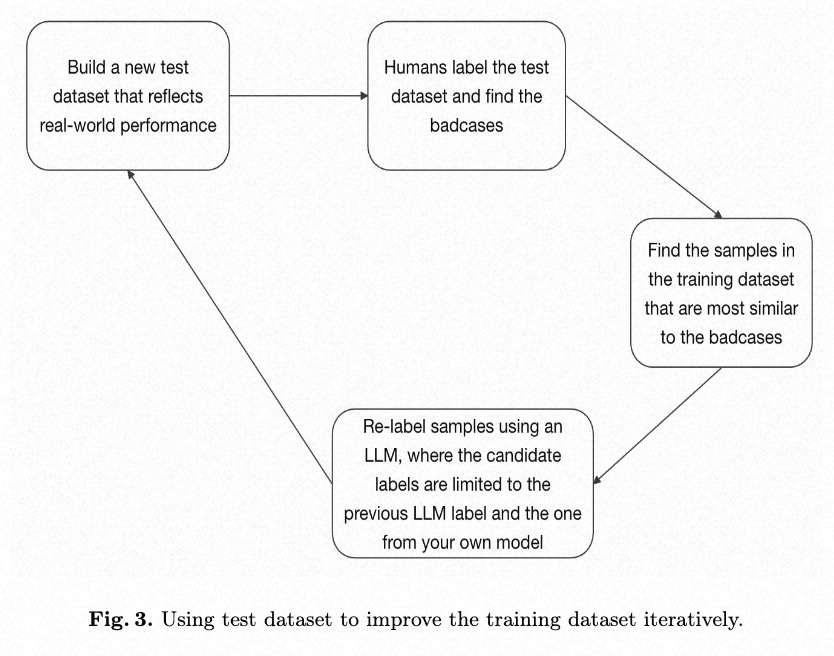

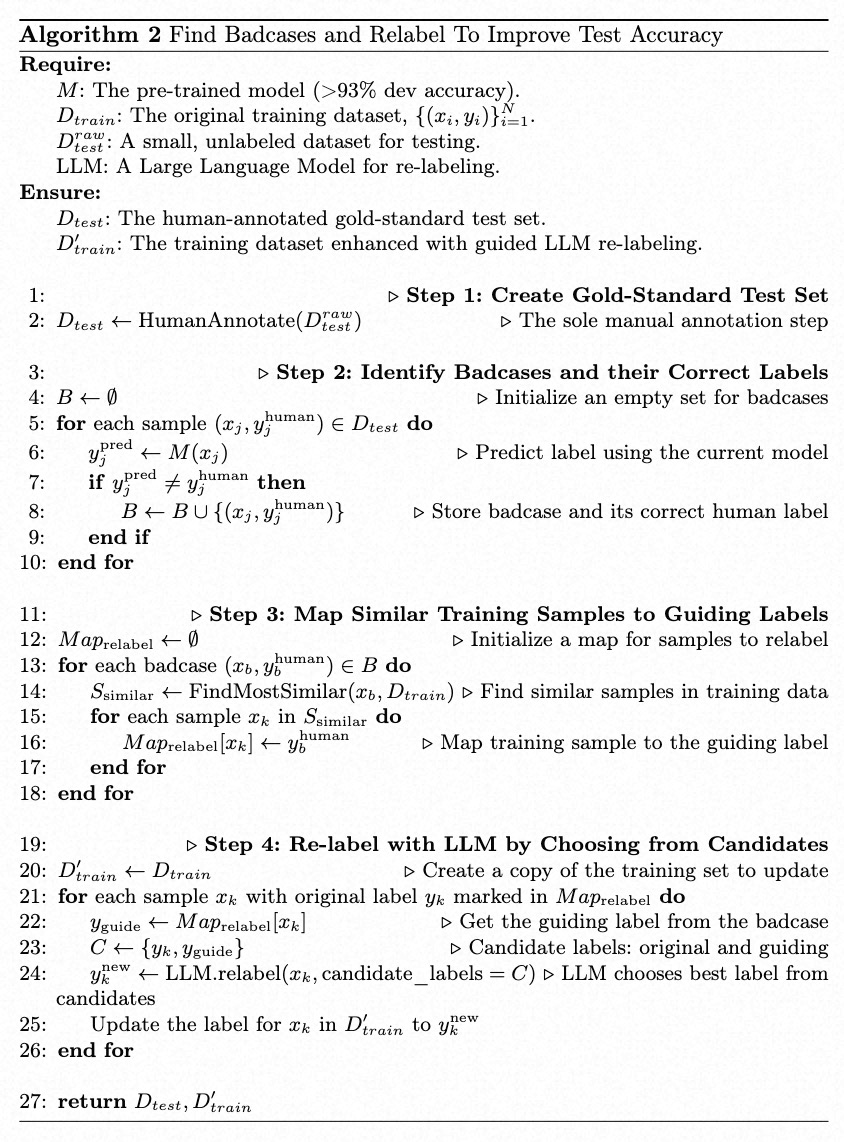

2.3 Find Badcases And Relabel

Once we achieve over 93% dev accuracy using self-prediction and LLM relabeling, we need to build a test dataset reflecting real-world performance. This requires manual annotation with a human in the loop. Next, we identify badcases in the test dataset where our model’s predictions disagree with the human labels. We use these badcases to find the most similar samples in the training dataset. These retrieved samples are then relabeled by an LLM. During the LLM relabeling process, we instruct the LLM via the prompt to treat the human-annotated label from the test dataset and the sample’s previous label as the candidate labels. Executing this step once may not be sufficient to improve the accuracy to, for example, over 90%. This step should be iterated using the remaining badcases, while adjusting the thresholds or methods for similarity searching, until the percentage of remaining badcases drops below a certain threshold, such as 10%.
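The similarity search from test badcases into the training set can be sketched as follows. This is a minimal illustration that assumes text data and uses bag-of-words cosine similarity as a stand-in for whatever similarity method is actually used (for example, embedding-based retrieval); the function name `find_similar_training_samples` and the `threshold` parameter are hypothetical.

```python
import math
from collections import Counter
from typing import List, Sequence


def _cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def find_similar_training_samples(
    badcase_texts: Sequence[str],
    train_texts: Sequence[str],
    threshold: float = 0.5,
) -> List[int]:
    """Return sorted indices of training samples similar to any badcase."""
    train_vecs = [Counter(t.lower().split()) for t in train_texts]
    hits = set()
    for text in badcase_texts:
        query = Counter(text.lower().split())
        for i, vec in enumerate(train_vecs):
            if _cosine(query, vec) >= threshold:
                hits.add(i)
    return sorted(hits)
```

Raising or lowering `threshold` between iterations corresponds to the threshold adjustment described above: a lower value retrieves more training samples for relabeling, at the cost of including less closely related ones.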

We do still need to manually annotate the test dataset. However, the dataset is small in size, and more importantly, this is the only step in our entire workflow that requires human annotation. This test dataset is crucial as it allows us to definitively determine our model’s performance by providing a benchmark that reflects real-world performance.

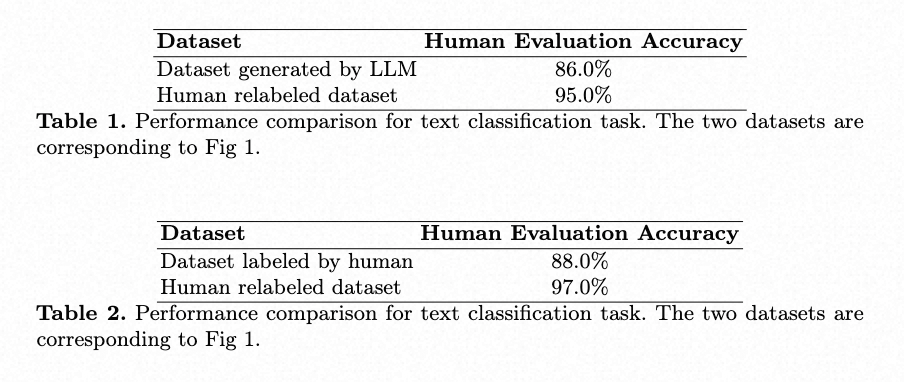

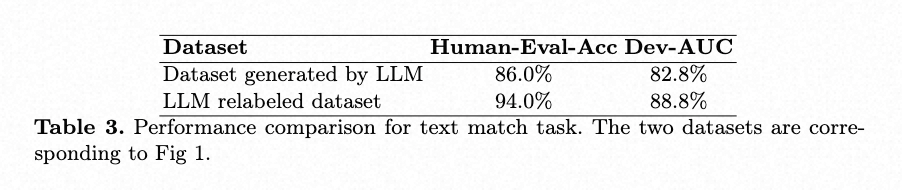

3. Experimental Results

For clarification, the human evaluation process involves presenting the model’s outputs on real-world data to human annotators. These annotators are then tasked with assessing the correctness of each prediction, determining if it is right or wrong.

The labels in the dev dataset are changed during the re-labeling process, while the test dataset remains unchanged.

4. Discussion

The key advantage of prompt-based data annotation is its efficiency in batch processing. By including a few examples (few-shot learning) in the prompt, an LLM can generalize and apply the annotation logic to an entire batch of data. LLMs therefore bring the amount of manual data labeling down to a quantity that is manageable for a single developer. For the relabeling step, the prompt-based LLM can be seen as a batch annotation/correction tool: humans write few-shot examples into the prompts to correct noise in the training dataset. Although LLMs are considered a tool for batch annotation, we have found in practice that providing a large number of showcases (examples) is not very effective. By examining the LLM’s reasoning process, we observed that it makes use of at most 1-5 showcases, even when we provide 20-30. Unlike humans, an LLM cannot automatically induce the underlying rules from a large set of showcases and then perform annotation. Consequently, batch-fixing specific badcases must be achieved through other methods of prompt optimization.

4.1 Discussion For Dataset Size

Since our noise correction method relies on the statistics of the training data itself, the amount of training data should be in the millions, rather than tens of thousands.

4.2 Discussion For Noisy Data Relabel

We find noisy data by contrasting original labels with model predictions. To correct noisy labels, an LLM can be employed to relabel the data, thereby reducing the scope of manual annotation. In the LLM relabeling step, our manual inspection shows that, once the correction of noisy data is reduced to a binary classification task, the LLM correctly resolves the majority of ambiguous data.

4.3 Discussion For Badcase Relabel

A method that attempts to selectively improve accuracy on the test dataset by changing the labels in the training dataset is highly inefficient, and may even be considered a trick, because it only targets better performance on this specific test dataset. This approach is for reference only.

4.4 Other Discussion

Why not convert all data annotations into a binary classification task for a second round of relabeling? The proposed method was:

For data where the LLM and our trained small model agreed, the candidate labels would be the LLM’s label and the small model’s second-best prediction. For data where they disagreed, the candidates would be the LLM’s label and the small model’s label.

We experimented with this approach but found that, for some simple samples, this relabeling process actually reduced the accuracy of the resulting labels. Ultimately, we decided against applying this comprehensive binary re-annotation to the entire dataset.
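For reference, the candidate-selection rule of this rejected variant can be sketched as below. This is an illustrative sketch only; the function name `pick_candidates` and the representation of the small model's output as a list of class probabilities are assumptions.

```python
from typing import Sequence, Tuple


def pick_candidates(llm_label: int, model_probs: Sequence[float]) -> Tuple[int, int]:
    """Return the two candidate labels for a second relabeling round.

    If the LLM label agrees with the small model's top prediction, the
    second candidate is the small model's second-best class. Otherwise
    the second candidate is the small model's top prediction.
    """
    ranked = sorted(range(len(model_probs)), key=lambda k: model_probs[k], reverse=True)
    top, second = ranked[0], ranked[1]
    if llm_label == top:
        return llm_label, second
    return llm_label, top
```

In the agreement case, the second candidate is a label that neither annotator originally chose, which is one plausible reason why forcing a binary choice on simple samples can introduce errors.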

5. Conclusion

In the era of LLMs, our goal is to train models for NLP tasks. To correct the noise in our initial dataset, we propose a framework that supports both a human-in-the-loop (HITL) and an LLM-in-the-loop (LITL) approach. Experimental results have validated the effectiveness of our method. Our idea can apply to a broad set of deep learning industry applications.

Our ultimate goal is to automatically ensure that all data is correct and to achieve a 100% accurate training dataset for any specific task without human labeling.

References

\bibitem{ref1}

Krizhevsky A, Sutskever I, Hinton G E. Imagenet classification with deep convolutional neural networks[J]. Advances in neural information processing systems, 2012, 25: 1097-1105.

\bibitem{ref2}

Achiam J, Adler S, Agarwal S, et al. Gpt-4 technical report[J]. arXiv preprint arXiv:2303.08774, 2023.

\bibitem{ref3}

Radford A. Improving language understanding by generative pre-training[J]. 2018.

\bibitem{ref4}

Ouyang L, Wu J, Jiang X, et al. Training language models to follow instructions with human feedback[J]. Advances in neural information processing systems, 2022, 35: 27730-27744.

\bibitem{ref5}

Raffel C, Shazeer N, Roberts A, et al. Exploring the limits of transfer learning with a unified text-to-text transformer[J]. Journal of machine learning research, 2020, 21(140): 1-67.

\bibitem{ref6}

Tong Guo. Automatic Label Error Correction. TechRxiv. March 12, 2025.

\bibitem{ref7}

Yang A, Li A, Yang B, et al. Qwen3 technical report[J]. arXiv preprint arXiv:2505.09388, 2025.

\bibitem{ref8}

Liu A, Feng B, Xue B, et al. Deepseek-v3 technical report[J]. arXiv preprint arXiv:2412.19437, 2024.

\bibitem{ref9}

Guo D, Yang D, Zhang H, et al. DeepSeek-R1 incentivizes reasoning in LLMs through reinforcement learning[J]. Nature, 2025, 645(8081): 633-638.

\bibitem{ref10}

Shao Z, Wang P, Zhu Q, et al. Deepseekmath: Pushing the limits of mathematical reasoning in open language models[J]. arXiv preprint arXiv:2402.03300, 2024.